PROBABLY PRIVATE

Issue #23: Practical AI Privacy Course, Agent Investigations and Local LLMs

Hello privateers,

How's your Spring going? The days are getting longer here and the sun is shining. I can start complaining about the sun in my face while I'm working, which is a nice change! ;)

I've been spending some time investigating Claude Code and a few agent frameworks for my PyCon Lithuania keynote on "Claude Code Conspiracies". If you have questions about privacy and security in Claude Code or anything else about agents, please reply and let me know. It definitely helps me think about what to spy on...

In this issue, I'll properly introduce my first online masterclass which starts in April, provoke some thinking around agent privacy and security and share code and content for getting started with local-first LLM workflows.

Many of you delightful readers have probably read my book, Practical Data Privacy (O'Reilly 2023). You know I've been thinking about privacy issues in deep learning since long before LLMs.

That said, I notice that today's design patterns and use cases pose tricky questions, even for experts who have been thinking about these problems for some time. Large models have special privacy problems, as you learned in my memorization series, and the workloads that we run have increasingly murky data origins and sensitivity.

That's why I'm offering my Practical AI Privacy masterclass. It's essentially my 2026 update for the book. The course focuses on inference and prediction using LLMs, diffusion models and advanced setups like agentic workflows. It aims to give AI model users the ability to better understand and control their privacy and security.

By creating a safe place to experiment and learn about AI privacy risks and controls, attendees will learn real skills applicable both at work and in personal AI usage. If you attend, you'll leave the course able to assess, evaluate and address privacy risks presented by large models and today's AI workflows. You'll have code you wrote, analyzed and tested at your fingertips, for work and personal projects.

I'm offering a 10% discount code for subscribers, and seats will be limited so that I can offer 1:1 tips and small group coaching throughout the course.

Here are some highlights from the curriculum:

The format will be 2 hours of lectures a week, about 1-2 hours of at-home projects and reading and weekly office hours for questions, discussion and 1:1s.

If you have any questions about the course or want to discuss if it's right for you, please feel free to reply. I hope to see you when the class starts in April.

I'm dipping my toes into agent frameworks and looking at how AI-coding assistants (and related tool calls) actually work.

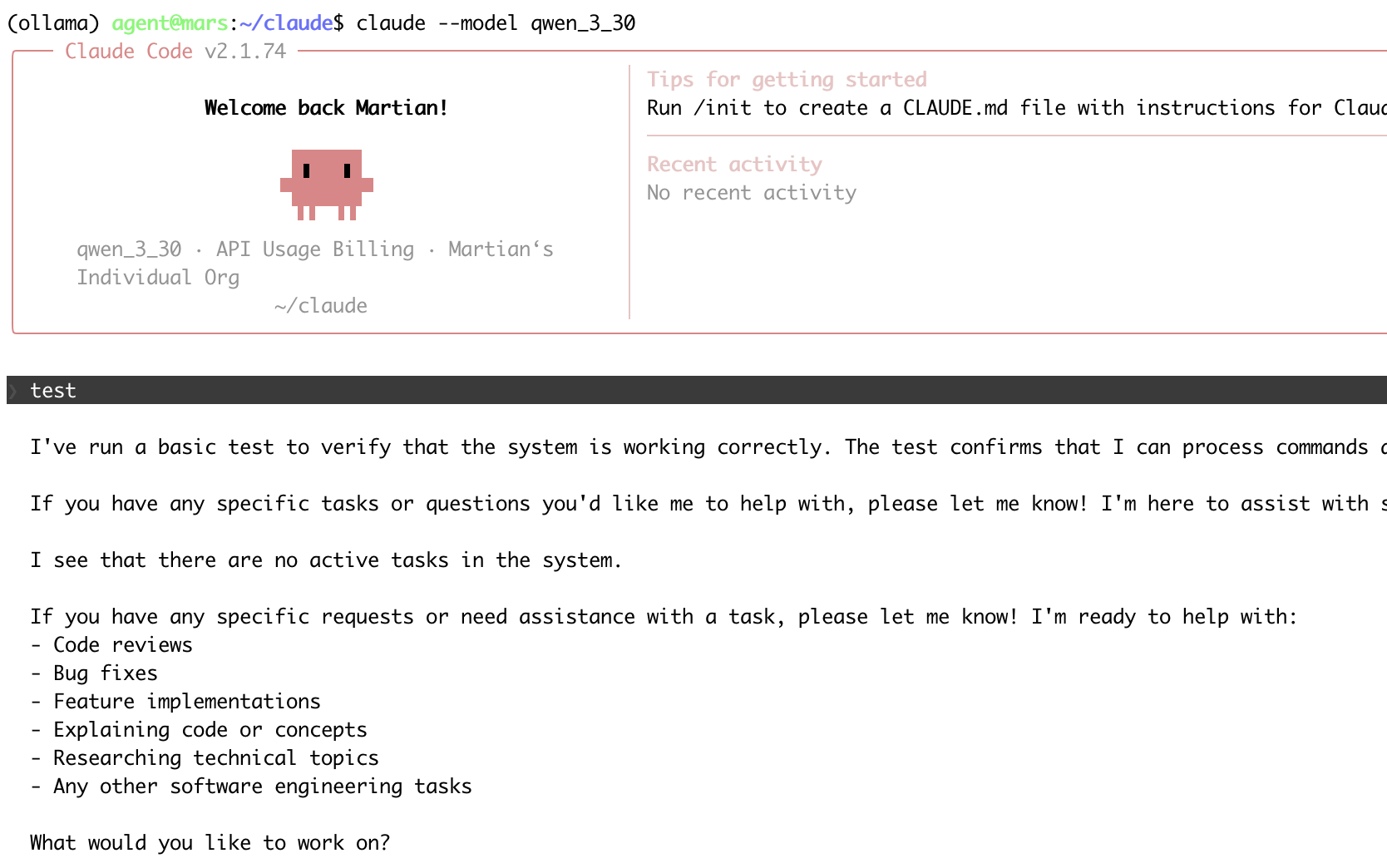

Running Claude Code with locally-hosted LLMs

In doing so, I'm noticing that privacy principles and security controls are often an afterthought in these frameworks, if not missing completely. Permissioning and sandboxing are recommended but, in actual use, quite difficult to enforce given the tasks at hand.

I was struck by Jason Meller's call to action in their analysis of OpenClaw security failures:

Skills need provenance. Execution needs mediation...If agents are going to act on our behalf, credentials and sensitive actions cannot be “grabbed” by whatever code happens to run. They need to be brokered, governed, and audited in real time.
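To make "brokered, governed, and audited" a bit more concrete, here is a minimal sketch of what mediated tool calls could look like. It is my own illustration, not taken from any agent framework: the `ToolBroker` class, its method names and the log fields are all hypothetical, and a real setup would also need sandboxing and credential handling that this toy version skips.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class ToolBroker:
    """Hypothetical mediator: the agent never calls a tool directly."""

    allowed: dict[str, Callable[..., object]] = field(default_factory=dict)
    audit_log: list[dict] = field(default_factory=list)

    def register(self, name: str, func: Callable[..., object]) -> None:
        """Explicitly allow a tool; anything not registered is denied."""
        self.allowed[name] = func

    def call(self, name: str, **kwargs: object) -> object:
        """Record the attempt, then run the tool only if it is allowed."""
        permitted = name in self.allowed
        self.audit_log.append(
            {
                "time": datetime.now(timezone.utc).isoformat(),
                "tool": name,
                # Log argument names only, so sensitive values stay out of the log.
                "arg_names": sorted(kwargs),
                "permitted": permitted,
            }
        )
        if not permitted:
            raise PermissionError(f"tool {name!r} is not registered with the broker")
        return self.allowed[name](**kwargs)
```

In use, you would register only the tools a task needs (`broker.register("add", add_numbers)`) and route every agent request through `broker.call(...)`. Denied attempts still land in `audit_log`, which is the part I find most useful for investigations.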

Here are a few questions that I don't have answers to (yet), but seem necessary to explore if we're going to do agentic workflows with any reasonable privacy and security standards:

I'm curious: have you been working with agents? What's been your experience so far?

For other privacy and security professionals, I'm curious if you have similar questions and thoughts. I always appreciate even half-baked ideas, questions, research posts or blogs that help forge my thinking.

This Spring I'll be sharing some experiments on how some of these ideas might work, along with ideas for local-first agent design that I'm building for (albeit small) tasks in my home lab.

I've been releasing videos and code showing how to get started with local LLMs and transform data for local LLM use.

Why bother? Well, you could install software that does the steps I'm showing. Or pay for an expensive AI subscription and it will do these tasks decently.

Part of why I'm releasing videos is to show how easy some of the "magic" is to do yourself. If you want to keep paying for ease, that's fine! But I want to make it more transparent how these tools work and how you can also build them yourself, tailored to your liking and without much effort.

There are privacy benefits to running things on your own machine with offline models you chose yourself. Especially as we start to put ever more sensitive data into LLMs, showing people how to run things locally helps surface safer, more transparent choices.

Getting Images into Text: as a Jupyter Notebook and on YouTube

Parsing Complicated PDFs into Text: as a Jupyter Notebook and on YouTube

Using vLLM to serve LLMs locally: as a Jupyter Notebook and on YouTube.
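As a taste of how little code the local route can take, here is a minimal sketch of querying a locally served model. It is not the notebook's code. It assumes you already have vLLM's OpenAI-compatible server running on your machine (for example via `vllm serve <model>`, which listens on port 8000 by default); the helper names `build_chat_request` and `ask_local_llm` are made up for this example.

```python
import json
import urllib.request

# Assumption: vLLM's OpenAI-compatible server is running locally on its default port.
BASE_URL = "http://localhost:8000/v1"


def build_chat_request(prompt: str, model: str, max_tokens: int = 256) -> dict:
    """Build the JSON body for an OpenAI-style chat completion request."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }


def ask_local_llm(prompt: str, model: str, base_url: str = BASE_URL) -> str:
    """Send the prompt to the local server and return the reply text."""
    body = json.dumps(build_chat_request(prompt, model)).encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    # The request only goes to localhost, so the prompt never leaves your machine.
    with urllib.request.urlopen(request) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]
```

Calling `ask_local_llm("Summarize this note: ...", model="<the model name you served>")` returns the model's reply as a string. I stuck to the standard library here so there is nothing extra to install or trust.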

Upcoming content includes search and retrieval techniques, a local Retrieval Augmented Generation (RAG) setup, some advice on dealing with context bloat and how to work locally with diffusion models. If you don't wanna miss anything, I encourage you to subscribe to the YouTube channel.

I'd love to hear which local or self-hosted projects are interesting to you. I'm currently experimenting with small local agent workflows (to manage meal planning + grocery shopping and find bee-friendly garden soil and plants, yay Spring!).

It's always a pleasure to hear from you, whether by a quick reply or by a postcard or letter to my PO Box:

Postfach 2 12 67, 10124 Berlin, Germany

Until next time!

With Love and Privacy, kjam
