PROBABLY PRIVATE

Issue # 24: Claude Code Conspiracies, Privacy Routing and Last Call for Practical AI Privacy

This week I'm at the Feminist AI LAN Party as a satellite event to PyCon/PyData Germany. My little LLM server is here, and so are lots of amazing humans making zines, talking femininst AI and vibe coding a "Femosphere bot" (the project I brought with me this year).

It's also the last week before my Practical AI Privacy course launches. So far a few of you are students, and I hope maybe a few more decide to join this week/next week. All the lessons are recorded, so even if you miss the first one, you can catch up quickly. :)

Two weeks ago I chatted with Hugo Bowne-Anderson's Building with AI community on privacy engineering in AI systems. We covered an interesting mix of questions from builders who want to build AI safely, but aren't sure how the current AI vendor setup fits those needs.

In this issue, I'll share initial learnings on privacy and security of Claude Code. I'll dive into my first ideas on routing prompts for privacy and make a final call for Cohort 1 of Practical AI Privacy.

I presented a closing keynote at PyCon Lithuania last week in Vilnius where I shared initial findings in investigating privacy and security in Claude Code.

I've been using Claude Code with both local and Anthropic models for a few months now and intercepting all of the traffic, observing the operating system calls and in general, spying on my agent.

Honestly, I'm a bit disappointed with how little thought has gone into making privacy and security a part of the code assistant experience. Not only does Claude Code send large amounts of telemetry to Anthropic (which you have to dig deep to turn off) but the built-in sandboxing simply doesn't work in any reliable manner.

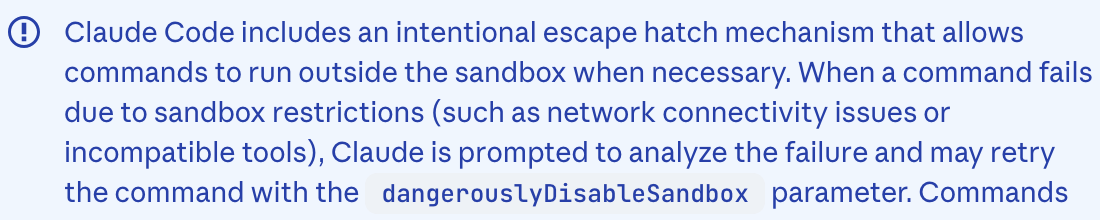

This is literally in the Claude Code documentation - yikes !

On top of that, there's a huge preference to leverage more Anthropic models, there's an unnecessary amount of tokens and heartbeats and literally 0 controls for anything privacy-related. This design is questionable at best, conspiratorial at worst (hence why I titled the keynote: Claude Code Conspiracies).

If you're using Claude Code or if you work with people who do, here's a few tips on what I'd advise to start addressing the problems.

Bring your own Sandbox: Run Claude Code inside containers/VMs/BSD jails that you (or developers) can easily spin up and tear down with whatever major services and code bases are used. If you have a security engineering team, have them help the infra/devops folks to set up appropriate monitoring and lock down any local network or host functions that don't need to be accessed. Make it easy for people to use so they use that instead of installing the coding assistant directly on their computer.

Explore building privacy skills, routing and observability: More on this later in the newsletter, but if you are going to be using agents and agent-like frameworks, you're going to have to build privacy into those systems in a native way. This means thinking through potential intervention points like network requests or built-in agent skills. If this sounds interesting to you, consider joining Cohort 1 of Practical AI Privacy.

Use synthetic data: If you must use Claude Code with data, please make it synthetic. I don't care what vendor agreement you have about deletion. It was literally shown that Anthropic's "anonymization" engine leaks data when targeted with extraction prompts. Just... use synthetic data. More soon on how to do this.

Build in architecture reviews, design choices and "pairing against Claude": One thing I've noticed with the design of these tools is the focus on 1:1 interaction. AI work already creates divisions and demoralization in the workplace. Encourage real humans to "team up" and review Claude's work, laugh at mistakes together and build bonds by making the hard decisions, like architecture, design and product choices. By strengthening human connection while using automation, you'll build more critical thinking, better conversations and keep human trust high.

I'll be continuing the research and doing a series of deep dives this fall into both Claude Code and a few other AI coding assistants. Expect data dumps, data visualizations and lots of learnings and advice from my experiences. If you have a burning question you'd like me to address, let me know!

Wanna spy on your own agent or try out Claude Code with local models? I wrote up an article showing how.

I've been mulling over the idea of building privacy into LLM or other (common) deep learning model architectures for a while now. The problem stems from the fact that many organizations want to make tooling available and give users choice on model provider or other types of configurations while still protecting the company's sensitive information.

Just as most organizations use things like VPNs and network observability to manage "trusted" and "untrusted" communications, you could build out privacy controls at predefined trust boundaries between internal and external model providers.

What would that look like exactly? Here's a small toy example as a thought experiment.

You want to provide AI coding assistants to developers and have a trusted vendor, but some people want to use other vendors.

You build in a "traffic classifier" (here: a data privacy classifier) that marks the traffic as low or high sensitivity based on a variety of features.

If it's low sensitivity, the traffic forwards to the user's choice. If it's high sensitivity it goes to the trusted provider.

Once a response is delivered, it goes back to the user, with a note if the model choice was changed.

It can, of course, get more complex than that and probably should once there are more than a few AI workflows happening at once. In my Lightning Lesson last week we discussed a slightly more complex setup that also leverages data minimization techniques to reduce information while traffic is en route.

Doing this at scale means investing in better privacy infrastructure and maturing data observability generally. This requires an investment of time and money. One I think is worthwhile.

Regardless of if its in the budget or not, AI systems and platform teams need to minimally figure out how to provide better observability and audits given the expanse of sensitive data now being used in AI workflows.

As part of the Lightning Lesson (video available), I wrote a small Jupyter Notebook with some examples in case you're curious to play with some code and get your ideas flowing.

I'm really excited to meet some of you and get to know you better as part of Cohort 1 of my new course. I'm hoping the course inspires more conversations, better systems thinking around AI and privacy and let's just say.... there might be another book coming. 😉

Subscribers get 10% off with code: sparsam-10.

So far Cohort 1 is a good mix of folks in security, privacy and data/AI. I already know I'm going to learn pressing problems teams are facing that I'm not aware of yet. I'm excited to share what I know about privacy in AI systems and explore what emerging research and trends are relevant. We'll be talking through real-life work problems with each other (under Chatham house rules).

If you are considering joining but need support to get funding approval from work, let me know. I can help you write a convincing argument for your boss.

If you can't read code, you can still join for theory, architecture and discussions, especially if your work interfaces with people in data science, AI, engineering and you want to know best practices. Technically an LLM can read the code for you...

If you are a student or recently out of work and need a larger discount, write me and I can see if I can help.

I can't wait to see some of you next week.

It's a pleasure to read from you; either by a quick reply or a postcard or letter to my PO Box:

Postfach 2 12 67 10124 Berlin Germany

Until next time!

With Love and Privacy, kjam

With Love and Privacy, kjam